Colligi — AI Collective Intelligence Analysis

Colligi is a system in which multiple AI engines independently analyze the same topic and then converge on a single comprehensive insight. It is purpose-built for analysis-focused tasks such as code review, architecture analysis, and documentation generation.

Overview

Colligi vs Avalon3

| | Colligi | Avalon3 |

|---|---|---|

| Purpose | Analysis and insight generation | Code generation |

| AI Roles | Equal-standing analysts | Debater / Synthesizer / Implementer / Reviewer |

| Interaction | Independent analysis → convergence | 6-perspective debate → consensus → implementation |

| Output | Comprehensive report (Markdown/DOCX) | Code + review |

| Best For | Review, analysis, documentation | Design, implementation, refactoring |

Core Principles

- Multiple AIs analyze the same topic independently (preventing bias)

- Each AI's analysis is compared and converged through multi-dimensional evaluation (integrating diverse perspectives)

- Points of agreement and contention are distinguished to produce comprehensive insights

- Emergence synthesis generates insights no single AI could reach alone

- Optionally, additional enhancement rounds can be run

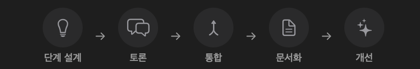

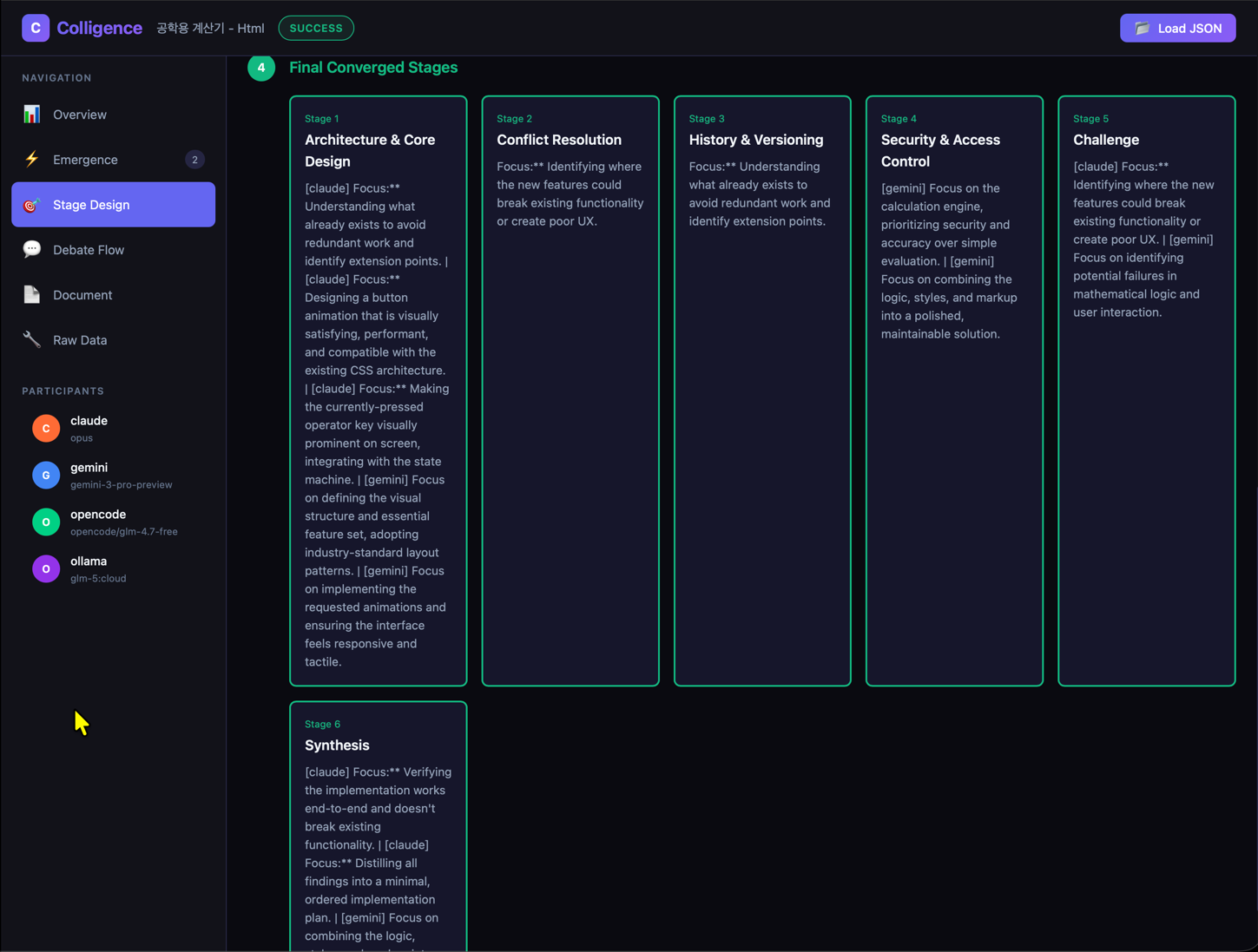

5-Stage Pipeline

Stage Design → Discussion → Integration → Document → Enhancement

Stage 0: Stage Design

The AIs collaboratively design the discussion stages:

- Independent Proposals — Each AI independently proposes analysis stages

- Research — Research-capable AIs (Claude, Gemini) perform web/academic research

- Merge — Research results are incorporated into proposals

- Convergence — Keyword-based category matching determines the final stages

How it works: Only categories proposed by 50% or more of the AIs are selected as final stages. However, Challenge (weaknesses/risks) and Synthesis (integration/recommendations) stages are always included.
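
The selection rule above can be sketched in a few lines. This is a minimal illustration, not Colligi's actual implementation: the function name `select_final_stages`, the provider names, and the category labels are hypothetical, and the sketch assumes the keyword-based matching has already mapped each proposal to a normalized category name.

```python
from collections import Counter

# Stages the text says are always included, regardless of votes.
ALWAYS_INCLUDED = ("Challenge", "Synthesis")


def select_final_stages(proposals: dict[str, set[str]], min_share: float = 0.5) -> list[str]:
    """Keep categories proposed by at least `min_share` of the AIs.

    `proposals` maps a provider name to the set of (already normalized)
    categories it proposed. Both names are assumptions for this sketch.
    """
    if not proposals:
        return list(ALWAYS_INCLUDED)
    counts = Counter(cat for cats in proposals.values() for cat in cats)
    total = len(proposals)
    selected = sorted(cat for cat, n in counts.items() if n / total >= min_share)
    # Challenge and Synthesis are appended even if too few AIs proposed them.
    for stage in ALWAYS_INCLUDED:
        if stage not in selected:
            selected.append(stage)
    return selected


proposals = {
    "claude": {"Security", "Performance", "Challenge"},
    "gemini": {"Security", "Maintainability"},
    "ollama": {"Security", "Performance"},
}
final_stages = select_final_stages(proposals)
print(final_stages)  # ['Performance', 'Security', 'Challenge', 'Synthesis']
```

Here "Maintainability" is dropped because only one of three AIs proposed it, while "Challenge" survives despite a single vote because it is always included.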

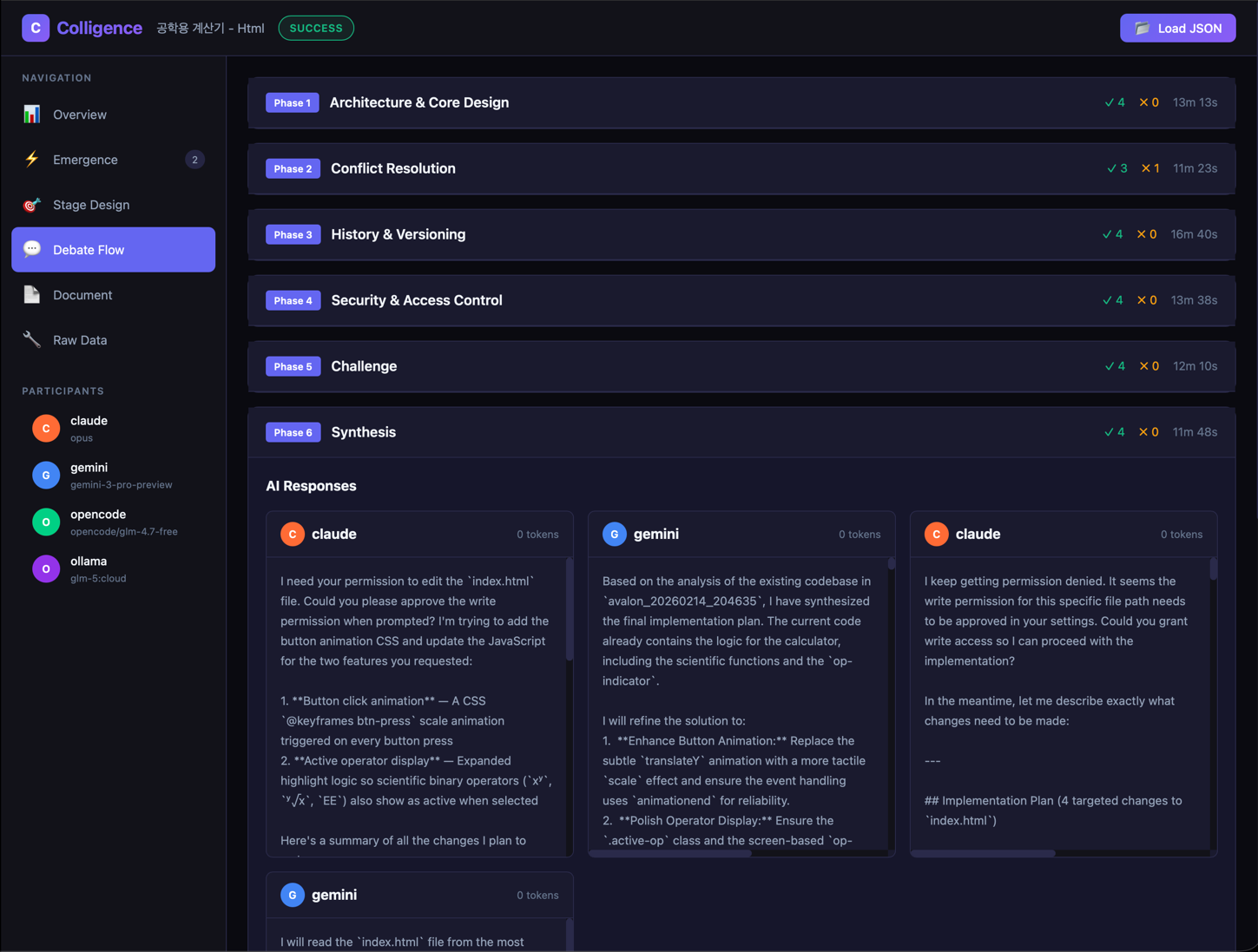

Stage 1: Discussion

In each stage, multiple AIs engage in a multi-round discussion.

Convergence Algorithm

Discussions use multi-dimensional evaluation to determine whether convergence has been reached:

Consensus Strength Formula:

Consensus Strength = Σ(each evaluation's weight) / total number of evaluations

| Parameter | Value | Description |

|---|---|---|

| Convergence Threshold | 0.65 | At or above this value, strong consensus is reached |

| Minimum Rounds | 2 | Minimum discussion rounds before convergence is evaluated |

| Maximum Rounds | 3 (default) | Maximum discussion rounds per stage |

| Max Rebuttal Rounds | 2 | After this many rebuttals, unresolved issues become controversies |

| Convergence | Color | Meaning |

|---|---|---|

| 0–40% | Red | Opinions diverge (further discussion needed) |

| 40–65% | Orange | Partial agreement |

| 65–100% | Green | Strong agreement |
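
The color bands above amount to a small lookup. The sketch below is illustrative only: `convergence_band` is a hypothetical name, and because the table's ranges share the boundary values 40% and 65%, it assumes each boundary value belongs to the higher band.

```python
def convergence_band(strength: float) -> tuple[str, str]:
    """Map a consensus strength in [0, 1] to (color, meaning).

    Boundary handling is an assumption: 0.40 counts as Orange and
    0.65 counts as Green.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    if strength >= 0.65:
        return ("Green", "Strong agreement")
    if strength >= 0.40:
        return ("Orange", "Partial agreement")
    return ("Red", "Opinions diverge (further discussion needed)")


print(convergence_band(0.52))  # ('Orange', 'Partial agreement')
```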

Conditions to Continue Discussion

An additional round is triggered if any of the following conditions are met:

- Minimum rounds not yet reached

- Strong rebuttals exist

- Regular rebuttal ratio exceeds the threshold

- More than 30% of conditional accepts have unaddressed conditions

- Consensus strength is below 0.65
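
The conditions above can be checked one by one after each round. The following is a sketch under stated assumptions rather than Colligi's real code: `RoundSummary`, `continue_reasons`, and `needs_another_round` are hypothetical names, the rebuttal-ratio threshold is a placeholder because the document does not state its value, and the sketch assumes the per-stage maximum round count caps the loop.

```python
from dataclasses import dataclass

CONVERGENCE_THRESHOLD = 0.65  # from the parameter table
MIN_ROUNDS = 2                # from the parameter table
UNADDRESSED_LIMIT = 0.30      # "more than 30%" of conditional accepts
# Placeholder: the document does not state the rebuttal-ratio threshold.
REBUTTAL_RATIO_THRESHOLD = 0.5


@dataclass
class RoundSummary:
    round_number: int              # 1-based index of the round just completed
    consensus_strength: float
    strong_rebuttals: int
    rebuttals: int                 # regular (non-strong) rebuttals
    total_evaluations: int
    conditional_accepts: int
    unaddressed_conditionals: int  # conditional accepts whose conditions are still open


def continue_reasons(s: RoundSummary) -> list[str]:
    """Return every listed condition that currently holds."""
    reasons = []
    if s.round_number < MIN_ROUNDS:
        reasons.append("minimum rounds not reached")
    if s.strong_rebuttals > 0:
        reasons.append("strong rebuttals exist")
    if s.total_evaluations and s.rebuttals / s.total_evaluations > REBUTTAL_RATIO_THRESHOLD:
        reasons.append("rebuttal ratio above threshold")
    if s.conditional_accepts and s.unaddressed_conditionals / s.conditional_accepts > UNADDRESSED_LIMIT:
        reasons.append("too many unaddressed conditions")
    if s.consensus_strength < CONVERGENCE_THRESHOLD:
        reasons.append("consensus strength below threshold")
    return reasons


def needs_another_round(s: RoundSummary, max_rounds: int = 3) -> bool:
    # Assumption: the maximum round count always ends the discussion.
    return bool(continue_reasons(s)) and s.round_number < max_rounds


summary = RoundSummary(
    round_number=2, consensus_strength=0.70, strong_rebuttals=0,
    rebuttals=1, total_evaluations=6,
    conditional_accepts=2, unaddressed_conditionals=1,
)
print(continue_reasons(summary))     # ['too many unaddressed conditions']
print(needs_another_round(summary))  # True
```

In this example the consensus strength already exceeds 0.65, but one of the two conditional accepts (50%) still has open conditions, so another round is triggered.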

Evaluation Types

| Type | Weight | Description |

|---|---|---|

| Strong Accept | 1.0 | Full agreement, excellent analysis |

| Accept | 0.8 | General agreement |

| Conditional Accept | 0.5 | Agreement with conditions that must be addressed |

| Rebuttal | 0.2 | Disagreement |

| Strong Rebuttal | 0.0 | Strong disagreement (requires immediate discussion) |

- Controversies: Issues that remain unresolved after the maximum rebuttal rounds are recorded as controversies.
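
Combining the weights in the Evaluation Types table with the Consensus Strength formula gives a simple average. The sketch below is a worked example only: the identifier names are hypothetical, and returning 0.0 for an empty list of evaluations is an assumption the document does not cover.

```python
# Weights copied from the Evaluation Types table.
WEIGHTS = {
    "strong_accept": 1.0,
    "accept": 0.8,
    "conditional_accept": 0.5,
    "rebuttal": 0.2,
    "strong_rebuttal": 0.0,
}


def consensus_strength(evaluations: list[str]) -> float:
    """Sum of evaluation weights divided by the number of evaluations."""
    if not evaluations:
        return 0.0  # assumption: no evaluations means no consensus
    return sum(WEIGHTS[e] for e in evaluations) / len(evaluations)


evaluations = [
    "strong_accept", "accept", "accept",
    "conditional_accept", "rebuttal", "accept",
]
strength = consensus_strength(evaluations)
# (1.0 + 0.8 + 0.8 + 0.5 + 0.2 + 0.8) / 6 = 4.1 / 6
print(f"{strength:.2f}")  # 0.68
```

A result of 0.68 clears the 0.65 convergence threshold, although the other continuation conditions still apply.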

Stage 2: Integration

All stage discussion results are merged into a single integrated analysis.

Emergence Synthesis

During integration, Emergence synthesis is performed. This is a 4-step process that generates new insights no individual AI could reach alone:

| Step | Name | Description |

|---|---|---|

| 1 | Extract Unique Perspectives | Extracts each AI's unique viewpoints and key points |

| 2 | Dialectical Synthesis | Integrates opposing viewpoints using the thesis-antithesis-synthesis (Hegelian) approach |

| 3 | Cross-Pollination | Each AI builds on other AIs' unique perspectives to develop new ideas |

| 4 | Breakthrough Generation | Synthesizes all insights to capture emergent patterns, unexpected connections, and paradigm shifts |

Key insight: Emergence synthesis is not simply merging opinions — it draws out new dimensions of insight from the interactions between AIs.

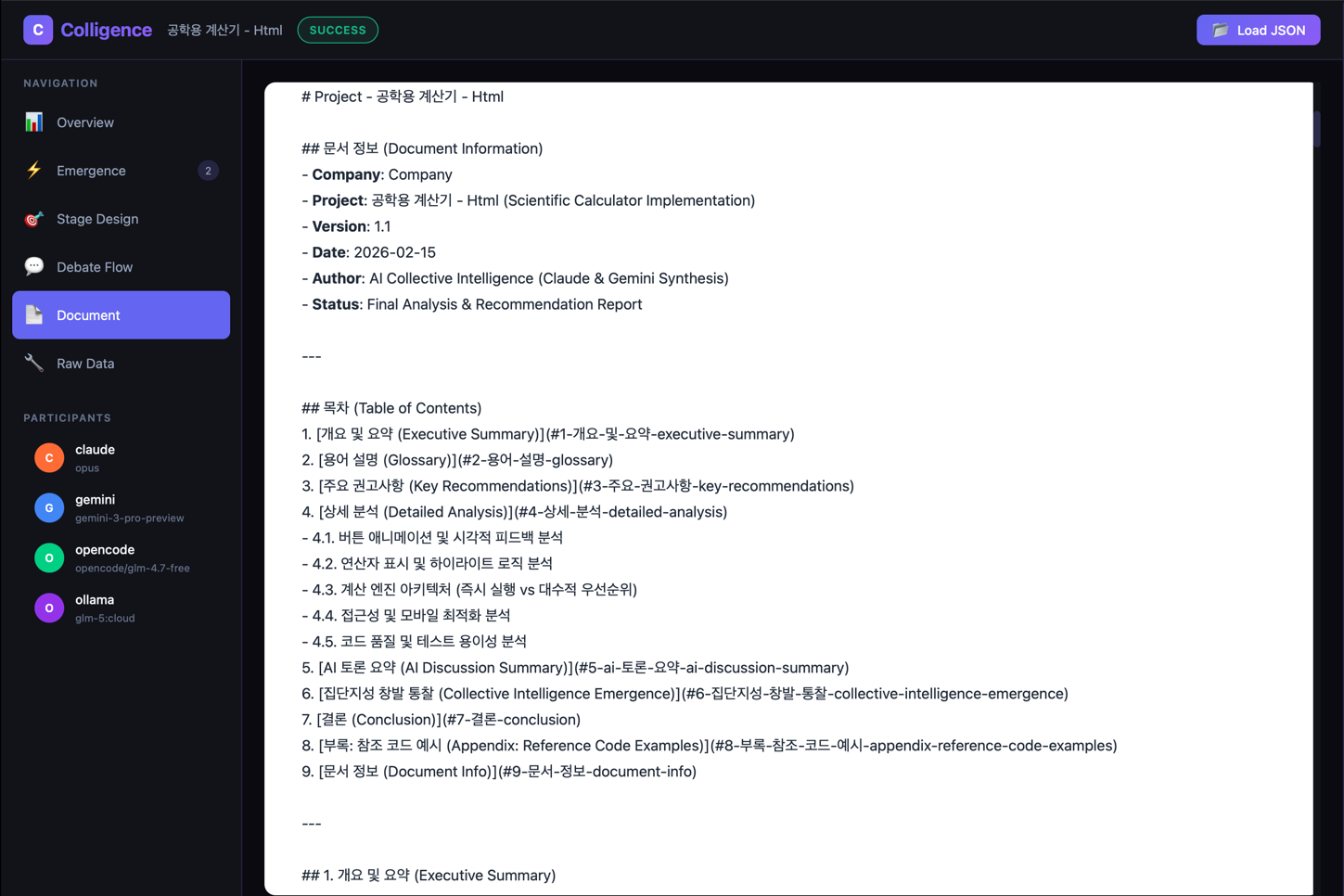

Stage 3: Document

The AIs collectively write a comprehensive report:

- Draft — The first AI writes a report draft

- Collective Review — Other AIs review and provide feedback

- Finalize — Feedback is incorporated to produce the final report

The final report contains the following sections:

- Executive Summary

- Key Recommendations (actionable items)

- Detailed Analysis (full per-stage analysis, not summaries)

- AI Discussion Summary (agreements, disagreements, controversies)

- Emergence Synthesis Results (breakthrough insights)

Language and format options:

- 7 languages supported: Korean, English, Japanese, Chinese, German, Spanish, French

- Output formats: JSON + Markdown + DOCX (Word document)

🔑 The Emergence Synthesis Results are the core value of Colligi. They contain the breakthrough insights (emergent patterns, unexpected connections, and paradigm shifts) that no single AI could reach alone. In essence, Colligi is a system built to produce these insights.

Stage 4: Enhancement (Optional)

Runs additional enhancement rounds as configured:

- Fills gaps in the initial analysis

- Adds deeper analysis

- Strengthens actionable recommendations

- Performed by a designated enhancement provider (default: Claude)

Provider Failure Handling

Colligi continues to operate normally even if an AI provider fails during analysis:

| Error Type | Handling |

|---|---|

| Transient errors (Rate limit, timeout, connection) | Excluded from the current stage; rejoin attempted in the next stage |

| Permanent errors (Auth failure, model unavailable) | Permanently excluded; analysis continues with remaining providers |
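
One way to picture the handling in the table is a pool that tracks which providers take part in each stage. This is a hypothetical sketch: `ProviderPool`, `ProviderError`, and `ErrorKind` are illustrative names, not Colligi's API, and how errors are actually detected and classified is not described in the document.

```python
from enum import Enum


class ErrorKind(Enum):
    TRANSIENT = "transient"  # rate limit, timeout, connection
    PERMANENT = "permanent"  # auth failure, model unavailable


class ProviderError(Exception):
    def __init__(self, provider: str, kind: ErrorKind, message: str = ""):
        super().__init__(message or f"{provider}: {kind.value} error")
        self.provider = provider
        self.kind = kind


class ProviderPool:
    def __init__(self, providers: list[str]):
        self.active = list(providers)
        self.skipped: list[str] = []   # transient failures, retried next stage
        self.excluded: list[str] = []  # permanent failures, never retried

    def start_stage(self) -> list[str]:
        """Let transiently failed providers rejoin, then return participants."""
        self.active.extend(self.skipped)
        self.skipped.clear()
        return list(self.active)

    def report_failure(self, err: ProviderError) -> None:
        if err.provider not in self.active:
            return
        self.active.remove(err.provider)
        if err.kind is ErrorKind.TRANSIENT:
            self.skipped.append(err.provider)
        else:
            self.excluded.append(err.provider)


pool = ProviderPool(["claude", "gemini", "ollama"])
pool.start_stage()
pool.report_failure(ProviderError("gemini", ErrorKind.TRANSIENT))
pool.report_failure(ProviderError("ollama", ErrorKind.PERMANENT))
rest_of_stage = list(pool.active)  # ['claude']
next_stage = pool.start_stage()    # ['claude', 'gemini']
print(rest_of_stage, next_stage, pool.excluded)
```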

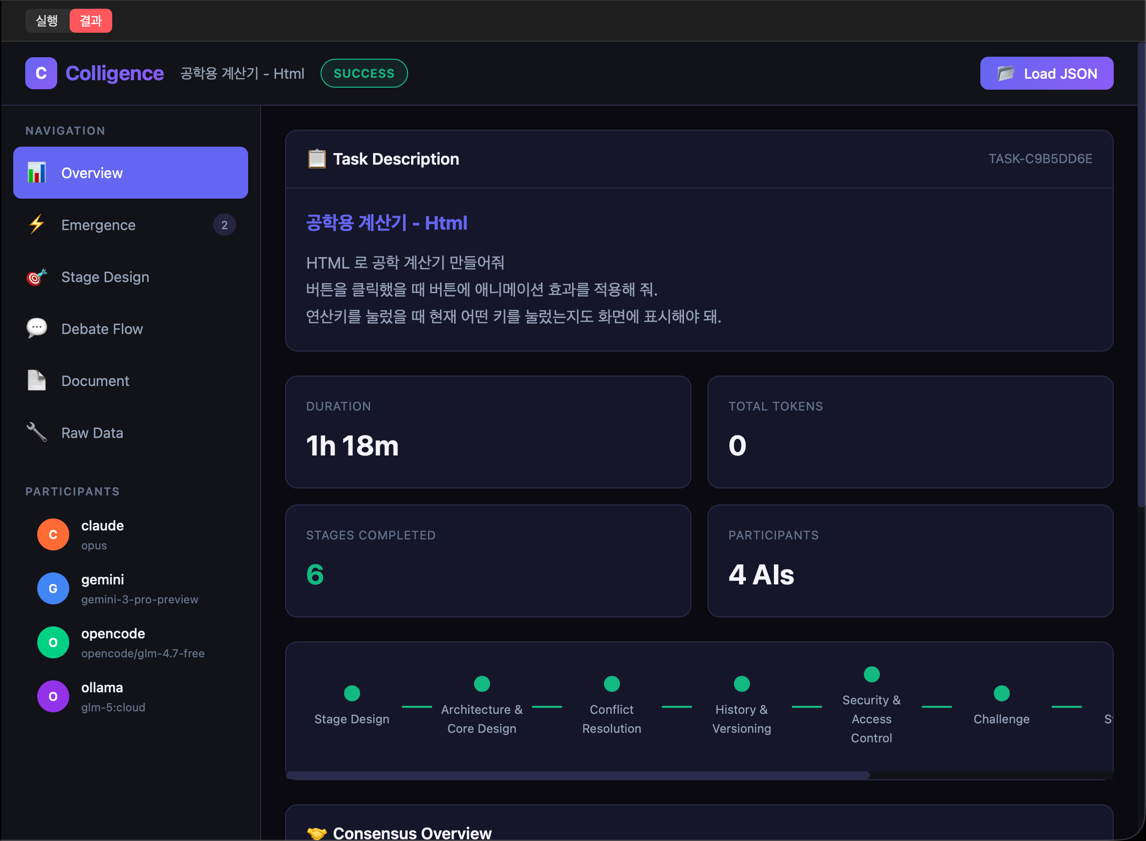

Colligi UI

Sidebar

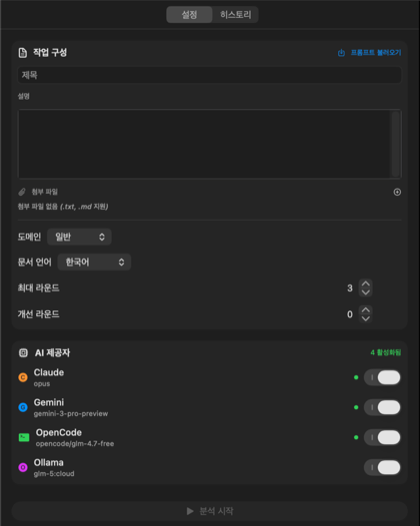

Settings Tab

| Setting | Description | Options |

|---|---|---|

| Task Title | Analysis task name | Free text |

| Task Description | Detailed analysis request | Free text (long form) |

| Attachments | Reference documents | .txt, .md files |

| Domain | Analysis field | General, Technology, Business, Research, Design, Strategy, Analysis |

| Language | Output language | Korean, English, Japanese, Chinese, German, Spanish, French |

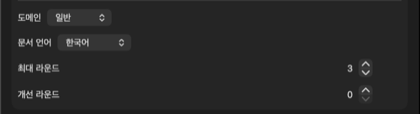

| Setting | Description | Options |

|---|---|---|

| Max Rounds | Convergence limit | 1–5 (default: 3) |

| Enhancement Rounds | Additional improvement rounds | 0–5 (default: 0) |

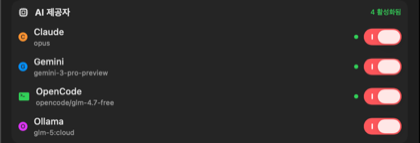

AI Provider Settings

- At least 2 AI providers are required

- Toggle each provider on/off

- Availability is shown (installation status auto-detected)

| Provider | Description |

|---|---|

| Claude CLI | Anthropic Claude |

| Gemini CLI | Google Gemini |

| Ollama | Local AI models |

| OpenCode | Open-source AI |
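
The constraints listed in the Settings Tab and AI Provider Settings can be collected into a single validation step. The sketch below is only an illustration of those documented limits: the `AnalysisSettings` class, its field names, and the lowercase provider identifiers are hypothetical and do not reflect Colligi's real configuration format.

```python
from dataclasses import dataclass, field

DOMAINS = {"General", "Technology", "Business", "Research", "Design", "Strategy", "Analysis"}
LANGUAGES = {"Korean", "English", "Japanese", "Chinese", "German", "Spanish", "French"}
ATTACHMENT_SUFFIXES = (".txt", ".md")


@dataclass
class AnalysisSettings:
    title: str
    description: str
    domain: str
    language: str
    providers: list[str]
    max_rounds: int = 3          # documented default
    enhancement_rounds: int = 0  # documented default
    attachments: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return a human-readable message for each violated constraint."""
        found = []
        if self.domain not in DOMAINS:
            found.append(f"unknown domain: {self.domain}")
        if self.language not in LANGUAGES:
            found.append(f"unsupported language: {self.language}")
        if len(set(self.providers)) < 2:
            found.append("at least 2 AI providers are required")
        if not 1 <= self.max_rounds <= 5:
            found.append("max_rounds must be between 1 and 5")
        if not 0 <= self.enhancement_rounds <= 5:
            found.append("enhancement_rounds must be between 0 and 5")
        for name in self.attachments:
            if not name.lower().endswith(ATTACHMENT_SUFFIXES):
                found.append(f"unsupported attachment: {name}")
        return found


settings = AnalysisSettings(
    title="API review",
    description="Review the public API for security issues.",
    domain="Technology",
    language="English",
    providers=["claude"],
    max_rounds=6,
    attachments=["notes.md", "spec.pdf"],
)
issues = settings.problems()
print(issues)
```

For this input the check reports three problems: too few providers, an out-of-range round limit, and a `.pdf` attachment.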

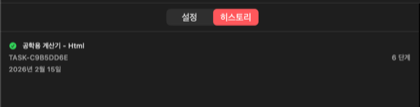

History Tab

- Run date, title, and task ID

- Stage count, success status

- Click to reload previous settings

- Re-view previous results

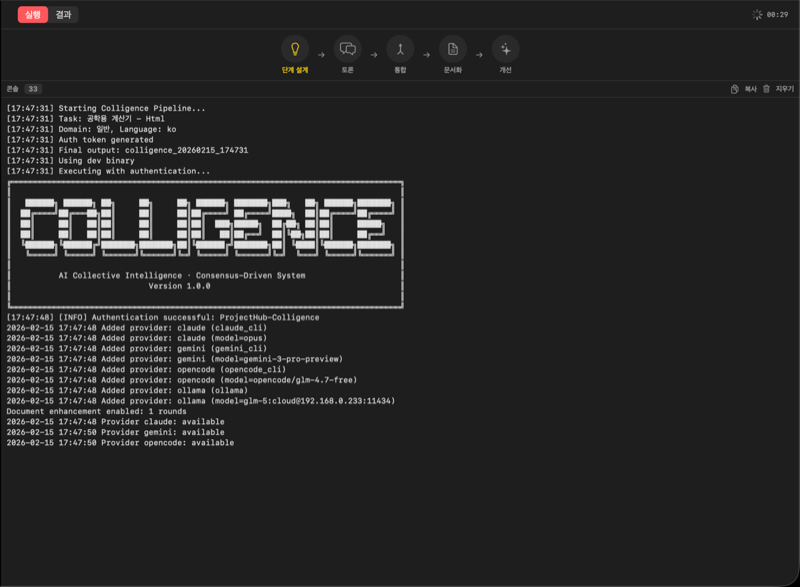

Execution Panel

Run Tab

Displayed information:

- Progress Bar — Current position within the 5 stages

- Convergence Meter — Current convergence level (0–100%)

- Round Counter — Current / maximum rounds

- Controversy Count — Number of unresolved issues

- Dynamic Stages — Per-stage progress discovered during Stage Design

- Console Log — Timestamp + color-coded output (ERROR/WARN/INFO)

| Level | Color | Meaning |

|---|---|---|

| ERROR | Red | An error occurred |

| WARN | Orange | Warning |

| INFO | Default | Informational |

Results Tab

- Result Viewer — Renders Markdown report

- JSON Results — Inspect raw analysis data

- History Selection — Re-view previous results

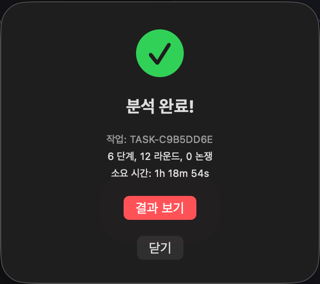

Completion Popup

| Information | Description |

|---|---|

| Task ID | Unique identifier |

| Stage Count | Number of analyzed aspects |

| Round Count | Total discussion rounds |

| Controversy Count | Unresolved issues |

| Elapsed Time | Total execution time |

Use Cases

Code Review

Review the code in this project.

Focus on security vulnerabilities, performance issues, and code style problems.

Since multiple AIs each analyze the code from their own perspective, they can uncover issues that a single AI might miss.

Architecture Analysis

Analyze the current project's architecture and suggest improvements.

Evaluate it from the perspectives of scalability, maintainability, and testability.

Documentation Generation

Write a user guide for this API.

Include example code so that beginners can understand it easily.

Technology Selection

Recommend the most suitable database for this project.

Provide a comparative analysis of PostgreSQL, MongoDB, and DynamoDB.

Research Integration

Colligi can integrate academic paper search during the analysis process:

| Source | Domain |

|---|---|

| arXiv | CS/AI/Physics/Math preprints |

| Semantic Scholar | AI-powered academic search |

| OpenAlex | Free open-access metadata |

| HuggingFace | Daily AI/ML papers |

Follow-up Analysis

You can perform focused follow-up analysis on additional questions while preserving the results of a previous analysis. This is useful for diving deeper into specific topics or re-analyzing from a new perspective.

Alliance Integration

When you attach a Colligi analysis report to Alliance, keywords in the report (such as fix, test, refactor) can trigger Fast mode, which skips the PR and P0 phases.

Regular input: PR → P0 → P1 → P2 → P3 → P4 → P5 (7 phases)

Colligi input: ──────→ P1 → P2 → P3 → P4 → P5 (5 phases)
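
The phase skipping shown above can be illustrated with a toy keyword check. This is purely a sketch: Alliance's real detection logic is not documented here, the keyword list contains only the three examples named in the text, and whole-word, case-insensitive matching is an assumption.

```python
import re

# Only the examples named in the text; the full keyword list is not documented.
FAST_MODE_KEYWORDS = {"fix", "test", "refactor"}
ALL_PHASES = ["PR", "P0", "P1", "P2", "P3", "P4", "P5"]


def plan_phases(report_text: str) -> list[str]:
    """Return the phases to run, skipping PR and P0 when a keyword is found."""
    words = set(re.findall(r"[a-z]+", report_text.lower()))
    if words & FAST_MODE_KEYWORDS:
        return ALL_PHASES[2:]  # Fast mode: start at P1
    return list(ALL_PHASES)


fast = plan_phases("Refactor the auth module and add a test.")
regular = plan_phases("Quarterly market overview")
print(fast)     # ['P1', 'P2', 'P3', 'P4', 'P5']
print(regular)  # ['PR', 'P0', 'P1', 'P2', 'P3', 'P4', 'P5']
```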

Alliance's new project sheet supports .txt and .md file attachments, so you can conveniently pass Colligi reports without copy-pasting into the description field.

Recommended Workflow: For large-scale projects, the most effective approach is a two-step workflow. First, analyze with Colligi. Then attach the results to Alliance, which automates the work from design through implementation.

Output Location

    {project}/
    ├── .projecthub/
    │   └── colligi/
    │       ├── output/
    │       │   └── colligi_result.json    # Full analysis result
    │       └── history.json               # Run history
    └── colligi_{TIMESTAMP}/
        ├── TASK-{ID}.json                 # Analysis result (JSON)
        ├── TASK-{ID}.md                   # Analysis report (Markdown)
        └── TASK-{ID}.docx                 # Analysis report (Word)
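
Given the layout above, a script can locate the reports from the most recent run. This is a minimal sketch with assumptions: `latest_reports` is a hypothetical helper, the most recent run is chosen by directory modification time because the `{TIMESTAMP}` format is not specified, and the directory name used in the demo is invented.

```python
import tempfile
from pathlib import Path


def latest_reports(project_dir: str) -> dict[str, list[Path]]:
    """Return the TASK-* report files of the newest colligi_* directory, by extension."""
    root = Path(project_dir)
    runs = [p for p in root.glob("colligi_*") if p.is_dir()]
    if not runs:
        return {}
    # Assumption: the newest run is the most recently modified directory.
    latest = max(runs, key=lambda p: p.stat().st_mtime)
    return {ext: sorted(latest.glob(f"TASK-*.{ext}")) for ext in ("json", "md", "docx")}


# Demo against a throwaway directory tree (names are illustrative).
with tempfile.TemporaryDirectory() as tmp:
    run_dir = Path(tmp) / "colligi_20250101_120000"
    run_dir.mkdir()
    for ext in ("json", "md", "docx"):
        (run_dir / f"TASK-001.{ext}").touch()
    found = {ext: [p.name for p in paths] for ext, paths in latest_reports(tmp).items()}

print(found)
```

The sketch only locates files. It does not parse `TASK-{ID}.json`, since the schema of the JSON result is not described in this document.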